The Ad-Fraud Playbook Comes for Music: What Spotify’s Bot Crisis Tells Us About Platform Monetization in 2025


When rapper RBX filed a class-action suit against Spotify on 3 November 2025, accusing the company of allowing “billions of fraudulent streams,” it sounded like one platform losing control of its data. Yet the Spotify bot crisis is bigger than that. It’s a story about how every major digital business now earns money.

Spotify’s crisis shows that in 2025, monetization itself is the vulnerability. Platforms no longer just sell ads or subscriptions—they sell the appearance of scale. Fraud is not an accident inside this economy. It is a byproduct of how growth is defined, measured, and priced.


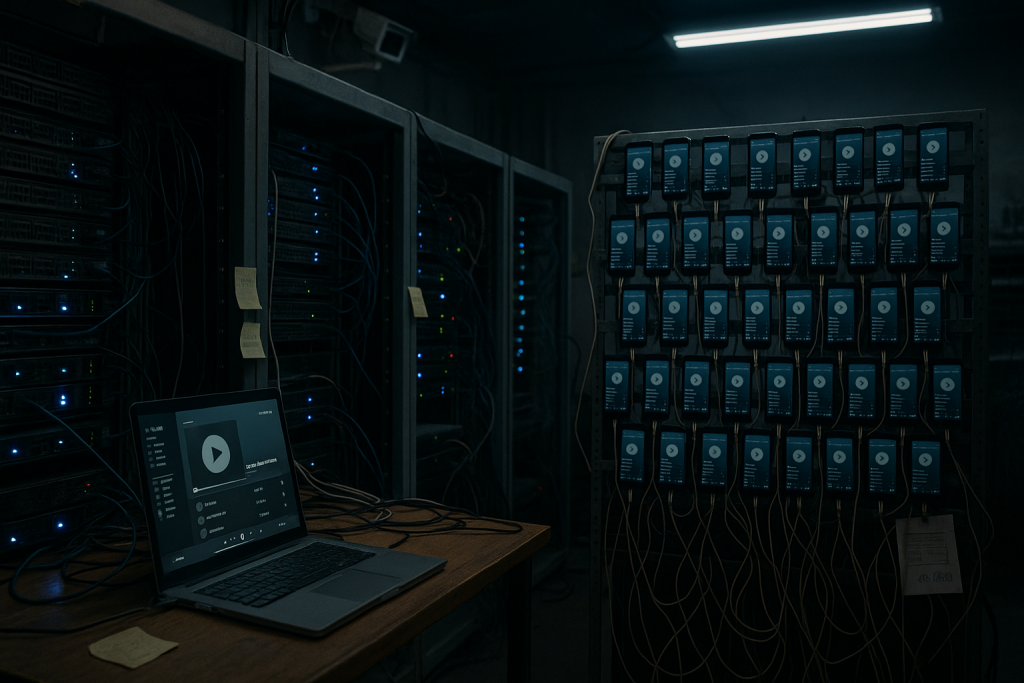

The Spotify Bot Crisis in Action

Spotify’s business model runs on motion: more users, more hours, more streams. Its pro-rata payout splits a shared royalty pool according to each track’s share of total streams. Every play, real or fake, claims a slice of the same pool. As total streams grow, each play becomes worth less. That dilution is invisible to listeners and of little concern to investors, who judge the company on total volume, not payout fairness.
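To make the dilution concrete, here is a minimal Python sketch of a pro-rata split. It assumes a single fixed pool divided purely by stream share, which simplifies how real royalty pools are calculated; the function name, track names, and euro amounts are hypothetical illustration values, not Spotify data.

```python
# Minimal sketch of a pro-rata royalty split. Assumes one fixed pool shared
# purely by stream count; all names and amounts are hypothetical.


def pro_rata_payouts(pool: float, streams: dict[str, int]) -> dict[str, float]:
    """Split a fixed pool across tracks in proportion to their stream counts."""
    total = sum(streams.values())
    if total == 0:
        return {track: 0.0 for track in streams}
    per_stream = pool / total  # every counted play, real or fake, earns this rate
    return {track: count * per_stream for track, count in streams.items()}


pool = 1_000.0  # hypothetical pool, in euros
honest = {"artist_a": 600, "artist_b": 400}
print(pro_rata_payouts(pool, honest))  # {'artist_a': 600.0, 'artist_b': 400.0}

# 250 bot plays on a third track: the pool stays the same size, so every
# genuine play is now worth less.
with_bots = {**honest, "bot_track": 250}
print(pro_rata_payouts(pool, with_bots))
```

Adding 250 bot plays leaves the pool at €1,000 but drops the per-stream rate from €1.00 to €0.80, so the two real artists lose €200 between them without a single listener changing behavior.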

The RBX lawsuit argues that this setup rewards Spotify for looking the other way. More streams, even fraudulent ones, make the platform look bigger. It can show rising engagement to shareholders and sell more ad inventory to brands. Still, fraud damages trust across the board because metrics drive market value, and you cannot rely on counts that bots inflate, as outlined in Vulture’s case summary.

This trade-off defines the platform era. Verification costs money and volume earns money. When the two collide, growth wins.

Monetization by Perception

The Spotify bot crisis reflects a wider trend: monetization through perception rather than reality. On YouTube, creators chase algorithmic watch time. On TikTok, brands buy “engagement” that no one can fully verify. Meanwhile, on Meta, advertisers pay for impressions based on self-reported reach. In each case, what matters is not whether the interaction was human, but whether it can be recorded, counted, and sold.

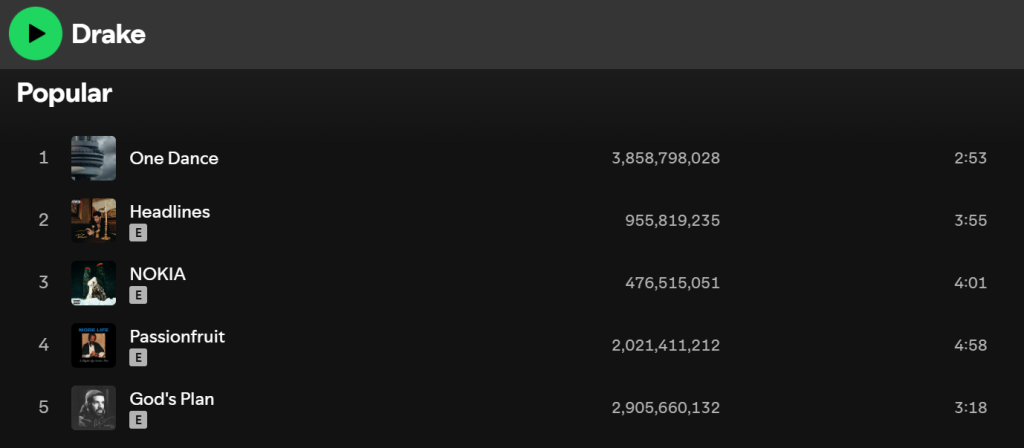

Spotify says 1.14% of streams across its catalog show signs of artificial activity. That headline pairs with public claims that policy changes would redirect money away from bad actors and toward eligible tracks. Together, those lines read like narrative management: the numbers reinforce the idea that the system is under control.
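A quick worked version of that redirect claim, under two simplifying assumptions that are not Spotify’s documented method: the royalty pool $P$ is fixed, and flagged streams are simply dropped from the count. If $S$ streams are counted and a fraction $f$ is flagged, the rate per remaining stream moves from $P/S$ to $P/\big((1-f)S\big)$:

$$
\frac{P/\big((1-f)S\big)}{P/S} = \frac{1}{1-f} = \frac{1}{1-0.0114} \approx 1.0115
$$

On those assumptions, removing 1.14% of streams lifts the payout on every remaining stream by about 1.15%, a small, uniform shift consistent with the claim that money moves toward eligible tracks.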

As a result, the industry now runs on performative accountability. Each quarter brings new anti-fraud policies, AI detectors, and dashboards. These tools protect confidence in the measurement, not the measurement’s accuracy.

The Ad-Tech Blueprint Behind the Spotify Bot Crisis

Streaming fraud recycles the old ad-tech stack. Bot farms, residential proxies, and “Fraud-as-a-Service” shops evolved from fake clicks and views to fake listens. Spotify became a target because its economy, like advertising, rewards activity without verifying identity, as shown in the CNM study in France.

When advertisers buy programmatic audio, they buy exposure to the same streams bots “listen” to. The only part of Spotify’s ecosystem widely verified by third parties is the ad surface, through partners such as DoubleVerify and Integral Ad Science. Ad buyers get audits, but artists and listeners get trust-based reporting. The code is similar. The incentives differ.

Growth as the Product

In 2024, Spotify added a 1,000-stream threshold and a €10 per-track penalty for artificial streaming. On paper, that looks strict. In practice, it favored large catalogs: it cut millions of low-activity tracks out of royalty payouts and nudged payouts to the remaining tracks upward. The reform trimmed visible spam and left the core model untouched: reward scale, tie profit to total volume, and define the metric yourself. The data looks cleaner while the growth curve holds, as reflected in Spotify’s support guidance and this overview of the €10 penalty.
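The mechanics of those two rules can be sketched in Python. This is a hypothetical model, not Spotify’s code: it assumes a single fixed pool, a trailing 12-month stream count per track, a boolean fraud flag supplied by some upstream detector, that flagged tracks are both unpaid and penalized, and that the €10 charge is billed to the track’s distributor. `Track` and `settle` are illustrative names.

```python
# Hypothetical sketch of the two 2024 rules described above. Field names,
# the fraud flag, and the single-pool payout are illustrative assumptions,
# not Spotify's actual implementation.
from dataclasses import dataclass

MIN_STREAMS_12M = 1_000       # streams needed over 12 months to earn royalties
PENALTY_PER_TRACK_EUR = 10.0  # charge per track flagged for artificial streaming


@dataclass
class Track:
    track_id: str
    distributor: str
    streams_12m: int          # streams over the trailing twelve months
    flagged_artificial: bool  # assumed output of an upstream fraud detector


def settle(pool_eur: float, tracks: list[Track]) -> tuple[dict[str, float], dict[str, float]]:
    """Return (royalties per track, penalties per distributor)."""
    # Assumption: flagged tracks are excluded from payouts as well as penalized.
    eligible = [
        t for t in tracks
        if t.streams_12m >= MIN_STREAMS_12M and not t.flagged_artificial
    ]
    total = sum(t.streams_12m for t in eligible)
    royalties = {t.track_id: 0.0 for t in tracks}
    for t in eligible:
        # Below-threshold tracks drop out, so eligible tracks split the whole pool.
        royalties[t.track_id] = pool_eur * t.streams_12m / total
    penalties: dict[str, float] = {}
    for t in tracks:
        if t.flagged_artificial:
            penalties[t.distributor] = penalties.get(t.distributor, 0.0) + PENALTY_PER_TRACK_EUR
    return royalties, penalties


tracks = [
    Track("big_hit", "label_x", 50_000, False),
    Track("niche_song", "indie_dist", 800, False),
    Track("botted", "shady_dist", 5_000, True),
]
royalties, penalties = settle(100.0, tracks)
print(royalties)  # {'big_hit': 100.0, 'niche_song': 0.0, 'botted': 0.0}
print(penalties)  # {'shady_dist': 10.0}
```

In this toy run the below-threshold `niche_song` earns nothing and its share flows to `big_hit`, while the €10 charge lands on the distributor of the flagged track.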

This type of fix is common. Platforms remove obvious bad actors to sustain confidence while the structure that invites abuse remains. The issue is managed, not solved, because the revenue engine depends on that structure.

You see the same logic in fintech: new “security thresholds” often stabilize cash flow and reduce risk while being marketed as safety. Spotify’s changes function the same way. They automate penalties, cut low-value payouts, and tighten margins. The fraud fight fine-tunes the economy rather than changing how value is created.

Platform Monetization as Storytelling

Financial health is a story that sells certainty. When Spotify reports 713 million monthly active users for Q3 2025, investors treat it as fact. There is no independent audit of core listening metrics. Credibility rests on repetition, as its newsroom post and Variety’s coverage show.

Other sectors follow the same play. AI companies tout “tokens processed.” Social apps tout “minutes watched.” The absence of third-party verification becomes a feature. It allows flexible accounting of success.

The Spotify bot crisis strips that veneer. The most profitable platforms sell the belief that their measurements mirror reality. Fraud reveals how little that belief depends on proof. Industry estimates put streaming fraud at $2 billion a year or more, a figure that shows the gap between reported numbers and verified ones, as noted in a Sky News interview and a WIRED investigation.

As a former media auditor, I see a clear line: where verification stops, a black box begins. Auditors can validate delivery, brand safety, and campaign metrics on Spotify. However, the foundation that props up investor confidence (total users, hours, and stream counts) remains self-reported. Spotify serves as both referee and player. It defines a “stream,” polices its own fraud, and publishes figures as fact.

The Human Fallout

Automation gives Spotify an edge, but it also creates fallout. Anti-fraud sweeps often hit independent artists after a genuine viral spike. Tracks vanish overnight. Royalties disappear. Distributors then charge to re-upload the same music. What began as a protection measure turns into another way to make money.
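Why might a genuine viral spike get swept up? One plausible mechanism is a detector that judges only the shape of the stream curve. The Python sketch below is hypothetical: the `is_flagged` rule, the 10x ratio, and the 7-day baseline are illustrative assumptions, not Spotify’s actual fraud logic.

```python
# Hypothetical spike rule: flag a track when today's streams exceed a fixed
# multiple of its recent average. Ratio and window are illustrative only.
from statistics import mean

SPIKE_RATIO = 10.0  # flag if the latest day exceeds 10x the baseline
BASELINE_DAYS = 7   # days of history used as the baseline


def is_flagged(daily_streams: list[int]) -> bool:
    """Flag the most recent day if it dwarfs the trailing baseline."""
    if len(daily_streams) <= BASELINE_DAYS:
        return False  # not enough history to compare against
    baseline = mean(daily_streams[-BASELINE_DAYS - 1:-1])
    return daily_streams[-1] > SPIKE_RATIO * max(baseline, 1)


bot_burst = [120, 110, 130, 125, 118, 122, 115, 4_000]  # bot farm switched on
viral_clip = [90, 95, 100, 98, 105, 97, 110, 6_500]     # song goes viral on video
steady = [100, 102, 98, 101, 99, 100, 103, 105]

print(is_flagged(bot_burst), is_flagged(viral_clip), is_flagged(steady))  # True True False
```

Because a rule like this never sees who is listening, it cannot tell a bot burst from a clip that took off overnight; separating the two would take slower, costlier checks on the listeners themselves.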

Large catalogs rarely draw attention, so smaller artists take the blow. The pattern mirrors the rest of the digital economy—systems built to reward scale end up punishing those without it.

That gap only widens as auditing grows less precise. When verification weakens, numbers start steering outcomes. Self-reported activity gets paid; authentic performance doesn’t. Inflated metrics attract budgets, and real engagement fades into the background. Over time, repetition replaces proof. Even trust starts to feel like something for sale.

Spotify’s rules show how risk travels. When bad data hurts an advertiser, the company smooths it over with credits or refunds. When it hurts an artist, that artist has to pay to appeal. The money keeps flowing. The accountability doesn’t.

Culture Follows the Metric

If you’re not an advertiser or artist, you might not think this affects you. But it does, because stream counts don’t only reflect success; they also decide it. Stream counts determine which songs hit playlists, which artists seem “viral,” and where labels put their money. A track boosted by bots can still gather real fans once it’s visible. Fake attention can turn into real popularity, but that popularity began with an unfair advantage in visibility, and visibility reads as proof that the song is worth hearing.
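The feedback loop can be shown with a toy model in Python. Everything here is a hypothetical assumption: a single playlist slot awarded to the track with the most total streams, a flat 100 organic plays per round, and a 5x boost for the playlisted track. It is not how any real playlist algorithm works, only an illustration of how an early fake lead compounds.

```python
# Toy model of a visibility feedback loop. All numbers are hypothetical:
# each round, the top PLAYLIST_SLOTS tracks by total streams get playlisted,
# and playlisting multiplies the organic plays a track receives.
PLAYLIST_SLOTS = 1
BASE_ORGANIC = 100   # organic plays per round for any track
PLAYLIST_BOOST = 5   # playlisted tracks get 5x organic plays


def simulate(initial: dict[str, int], rounds: int) -> dict[str, int]:
    """Run the loop and return each track's cumulative stream count."""
    totals = dict(initial)
    for _ in range(rounds):
        ranked = sorted(totals, key=totals.get, reverse=True)
        playlisted = set(ranked[:PLAYLIST_SLOTS])
        for track in totals:
            boost = PLAYLIST_BOOST if track in playlisted else 1
            totals[track] += BASE_ORGANIC * boost
    return totals


# Two equally appealing songs; one starts with 1,000 bot plays.
result = simulate({"botted_song": 1_000, "organic_song": 0}, rounds=10)
print(result)  # {'botted_song': 6000, 'organic_song': 1000}
```

Only the first 1,000 plays on `botted_song` were fake; the other 5,000 are real plays its playlist placement earned, while an equally appealing song never gets the slot.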

Why the Spotify Bot Crisis Matters Beyond Music

Because so many platforms across the web rely on likes, follows, listens, and views to fuel algorithms that fight for our attention, they all wrestle with the same tensions:

Scale vs. truth: more data fuels revenue, and deep checks slow growth.

Automation vs. accountability: machine systems raise efficiency, and they blur responsibility.

Narrative vs. transparency: markets reward confident numbers more than cautious ones.

By 2025, every major tech company sits on that fault line. Ad networks learned this lesson in 2017 when Procter & Gamble pushed for transparency and third-party audits, forcing platforms to open up, as detailed in a Marketing Week interview and an Adobe recap of the five-step program.

Where the Spotify Bot Crisis Heads Next

The correction is more likely to come from advertisers than from artists or regulators. Once brands ask what share of audio impressions is fake, the demand for external verification will outweigh the benefit of secrecy.

A shift like this has reshaped digital ads before. When P&G drew a hard line in 2017, YouTube and Meta accepted third-party measurement. Spotify now sits at a similar point. Its bot scandal could become streaming’s P&G moment, where listening data finally faces independent checks.

Until then, fraud is punished only when someone catches it. No one can say how much inflation still sits inside the counts, and that risk remains priced into the model.

The Real Lesson of 2025

The Spotify bot crisis is not only about music integrity. It is about the economics of trust. Platforms in 2025 do not earn money from content. They earn it from metrics that simulate attention. The more activity they record, the more valuable they look, even when a share of that activity is artificial.

Here is the lesson: the modern platform acts as both merchant and accountant of its own economy. It defines what counts as real, reports the results, and profits from the ambiguity. Fraud exploits the same loopholes that the business model already depends on.

The lawsuit will fade. The structure will not. As long as growth is priced higher than truth, every platform will face its own version of a bot crisis. The open question for 2025 is simple and hard: can honesty be monetized?
