Why AI-Generated Whitelists Are a Legal Time Bomb for Agencies

The Liability Trap: AI-Generated Inclusion Lists and Brand Safety’s Next Legal Problem

A growing number of agencies are swapping human-reviewed inclusion lists for AI-generated ones, but the legal and operational safeguards haven’t caught up yet. In interviews and industry reports, a pattern is emerging: most teams are moving faster than their contracts, insurance policies, or QA workflows were designed to support.

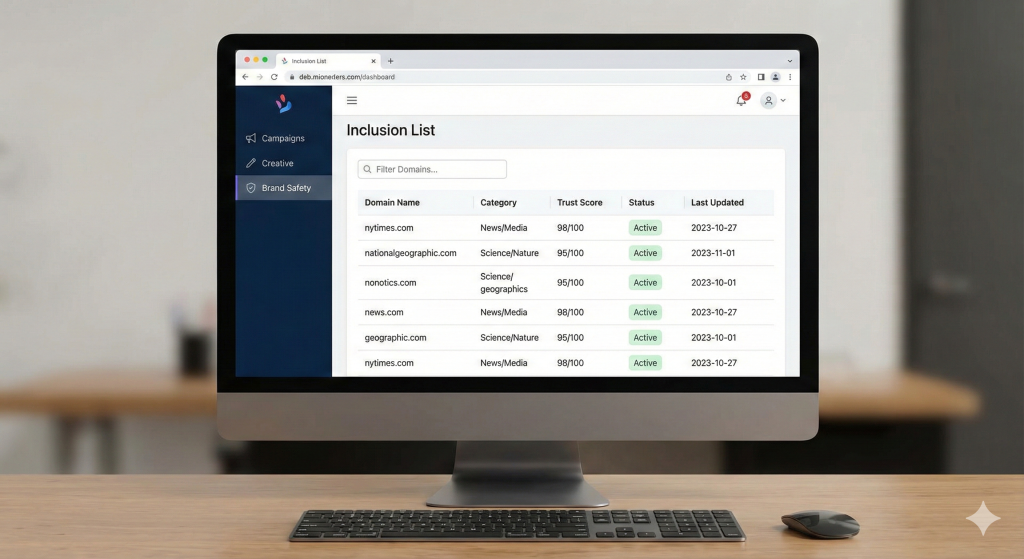

Across the industry, inclusion lists have always been positioned as the “safe” inverse of blocklists — curated inventories of publishers that supposedly reduce risk and control quality, as described in the ANA’s Programmatic Transparency Study.

Traditionally, building them meant hours of manual work. GroupM’s UK m-List, for example, contains roughly 6,000 domains and is reviewed quarterly by an internal committee rather than refreshed algorithmically, a process aligned with the ANA’s analysis of manual governance. That level of supervision is meticulous but costly: some agencies say list upkeep demands “hundreds of hours per year,” which makes it one of the most labor-heavy parts of ad ops, a point repeated in the ANA research.

A former analyst who worked on these reviews described the process as “the part of the job that everybody respected but nobody wanted.” They recalled opening site after site, making judgment calls based partly on metrics and partly on instinct. “You’d end up with ten tabs open by accident — you just kept clicking,” they said. “But you learned to spot the weird stuff quickly.”

That inefficiency used to act as a safeguard. Now it’s disappearing.

The New Workflow: AI Ingests, Classifies, and Approves

As programmatic spending shifts deeper into curated deals and PMPs — about 82% of spend now flows through private setups instead of the open exchange, according to the ANA transparency study — agencies are under pressure to produce larger, fresher inclusion lists without expanding headcount.

That’s where automation has slipped in.

Vendors leading this shift

- The Trade Desk’s OpenSincera pulls metadata for roughly 400,000 publishers and helps layer quality signals into inclusion lists.

- DoubleVerify’s suitability tools, described in its product documentation, use AI to evaluate text, imagery, audio, and video signals across environments like TikTok and CTV.

- Independent agencies such as AxM Media have openly discussed using ChatGPT to identify gaps in vertical-specific lists.

- Omnicom’s Assembly has piloted Microsoft Copilot to categorize publishers automatically, according to early workflow tests shared in industry discussions.

The pitch is attractive: automate the drudgery and shrink a multi-week manual task into minutes.

An agency buyer I spoke with called this “the closest thing we’ve had to an easy button.” But they also added: “Easy buttons usually hide the hard parts.”

The Economics Underneath the Shift

Manual list building has always been resource-heavy — not just because people have to look at thousands of domains, but because inclusion lists must be refreshed regularly as the web changes. The ANA transparency report notes that list curation is one of the most significant operational cost drivers in brand safety governance.

Several agencies admitted that clients started questioning why they were paying for hours of “site review labor.” Automation helped the optics. Replacing line-item labor with a tech-enabled workflow makes the process seem more scalable — and more justifiable — even if risk doesn’t disappear.

But cutting labor cost doesn’t replace the nuance humans caught, especially on borderline sites.

The Hard Part: Video and CTV

Inclusion lists were originally built for display inventory. A human could skim a page and make a judgment call. But the rise of CTV and short-form social video complicates everything.

DoubleVerify has acknowledged in its documentation that manual review is infeasible at video scale, which is why its tools analyze frames, audio tracks, and metadata rather than reviewing full clips.

The IAB Europe Brand Safety Guide reinforces this, noting that multimedia classification is foundational as video consumption accelerates.

But this is where risk quietly escalates. If a classifier approves a channel based on a few samples, and a later upload crosses a brand-safety line, the agency still has to explain how the approval happened.

A senior programmatic buyer at a major brand told me:

“It’s not that the model is wrong. It’s that it didn’t see the thing that turns into a crisis.”

Where Liability Actually Lands

This is the part agencies are least prepared for.

1. AI vendors usually disclaim responsibility

The GARM Brand Safety Framework makes clear that most liability sits with agencies or advertisers because suitability tools are advisory — not guaranteed. Many vendors limit or disclaim responsibility for misclassification.

2. Insurance policies are not designed for AI mistakes

E&O carriers have begun excluding certain AI-driven failures unless agencies opt into AI-risk riders — an issue raised frequently in insurance compliance commentary.

In other words: if an AI whitelists something harmful, the agency may be effectively uninsured.

3. MSAs rarely distinguish manual vs. automated review

Most master service agreements guarantee “brand-safe delivery” but never specify whether vetting must be human or machine-driven. That ambiguity is where disputes start.

A compliance advisor interviewed in industry analysis said:

“Assuming the AI knows best is how agencies trip on compliance.”

If human oversight is removed, and an AI model misclassifies something sensitive, the chain of responsibility becomes extremely difficult to unwind.

Auditors Are Quietly Building a Backstop

Auditors have already changed how they review inclusion lists.

A standard audit now includes:

- manual review of 5–10% of AI-generated output, consistent with ANA audit recommendations

- cross-checks with NewsGuard, Comscore, IAS, and Jounce

- checks for misclassification patterns — slang, satire, MFA-style “slop sites” — outlined in Jounce’s MFA detection guidance
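The sampling step in the list above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `sample_for_manual_review`, the default 5% rate, and the fixed seed are hypothetical choices, not a procedure taken from the ANA recommendations or from any auditor quoted here.

```python
import math
import random


def sample_for_manual_review(domains, rate=0.05, seed=0):
    """Pick a reproducible random subset of AI-approved domains for human review.

    `rate` is the share of the list to review (0.05 to 0.10 matches the
    5-10% range mentioned above). `seed` is a hypothetical parameter that
    makes the draw repeatable so an auditor can re-create the same sample.
    """
    if not 0 < rate <= 1:
        raise ValueError("rate must be in (0, 1]")
    # Deduplicate and sort so the same input always yields the same sample.
    unique = sorted(set(domains))
    if not unique:
        return []
    # Round up, and always review at least one domain.
    k = max(1, math.ceil(len(unique) * rate))
    return sorted(random.Random(seed).sample(unique, k))
```

A fixed seed matters more than it looks: if a dispute arises later, the agency can show exactly which domains a human saw and which ones were approved by the model alone.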

One analytics director said their team built Python scripts to cross-reference AI classifications with external trust scores. They also added a simple rule:

“If the two systems disagree, we don’t run the domain.”
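A rule like that is simple to express in code. The sketch below is a hypothetical reconstruction, not the team's actual script: the `Review` record, the 0-100 trust-score scale, the `TRUST_THRESHOLD` cutoff of 70, and the choice to treat a missing external score as a disagreement are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional

# Assumed cutoff on an assumed 0-100 scale; real vendors score differently.
TRUST_THRESHOLD = 70.0


@dataclass(frozen=True)
class Review:
    domain: str
    ai_approved: bool              # verdict from the AI classifier
    trust_score: Optional[float]   # external score; None if no data exists


def decide(review, threshold=TRUST_THRESHOLD):
    """Return 'run', 'exclude', or 'hold' for a single domain."""
    if review.trust_score is None:
        # Assumption: no second opinion counts as a disagreement.
        return "hold"
    external_ok = review.trust_score >= threshold
    if review.ai_approved and external_ok:
        return "run"       # both systems approve
    if not review.ai_approved and not external_ok:
        return "exclude"   # both systems reject
    return "hold"          # the two systems disagree, so the domain does not run


def build_inclusion_list(reviews):
    """Keep only domains that both systems approve."""
    return sorted(r.domain for r in reviews if decide(r) == "run")
```

Separating "hold" from "exclude" is a design choice rather than part of the quoted rule: neither outcome runs, but the held domains form a natural queue for human review.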

This aligns with the IAB Europe suitability guidance, which notes that contextual AI still struggles with cultural nuance, satire, and political context — areas where human review continues to outperform automation.

The Question Some in Ad Ops Are Asking: Was the Manual Pain a Protective Feature?

Industry materials indirectly support a theory many ad-ops veterans have voiced: slow, human-driven workflows forced analysts to notice subtle signals — layout oddities, tone inconsistencies, or publisher patterns that metrics alone didn’t reveal.

StackAdapt’s brand-safety guidance reinforces this idea, noting that AI “has limits and requires human oversight” because many contextual cues do not map cleanly to model-detectable patterns.

Humans are good at “this feels off.”

Models are good at “this matches the training data.”

Those evaluations don’t always overlap.

So What Should Marketers Ask Their Agencies Now?

AI-generated inclusion lists aren’t going away. The web is too large, and the pressure to automate too high. But the industry has outpaced its own governance — something reflected across multiple external analyses.

Here are the questions marketers should start asking:

- How was the list generated?

- Which model(s) made the decision?

- What third-party checks were run?

- Is there a human tripwire?

- Does the MSA explicitly cover AI-driven failures?

- Does insurance include AI-risk coverage?

The Bottom Line

Automation solves the volume problem, but it doesn’t solve accountability.

As inclusion lists shift from craft to code, the industry must rebuild the trust chain around them — not just the tools.

When something slips through, the advertiser isn’t going to ask whether a human or an AI classifier approved it.

They’re going to ask who is responsible.

Right now, that answer isn’t nearly as clear as it should be.
